In a recent article in Annals of Surgery, a research team from Massachusetts General Hospital and MIT details the ways in which artificial intelligence (AI) could revolutionize the practice and teaching of surgery, and how patients will benefit from safer surgeries and better outcomes.


Erica Shenoy, MD, PhD

For patients in hospitals and other healthcare settings, a Clostridium difficile (C. difficile) infection can result in serious complications and even death. The infection is caused by a bacterium, and its symptoms include diarrhea, fever and severe abdominal cramps. While some cases may be mild, some can be...

Researchers from Massachusetts General Hospital, Duke University and the International Centre for Diarrheal Disease Research in Dhaka, Bangladesh, have used machine learning algorithms to find patterns within communities of bacteria living in the human gut. These patterns could indicate who among the approximately one...

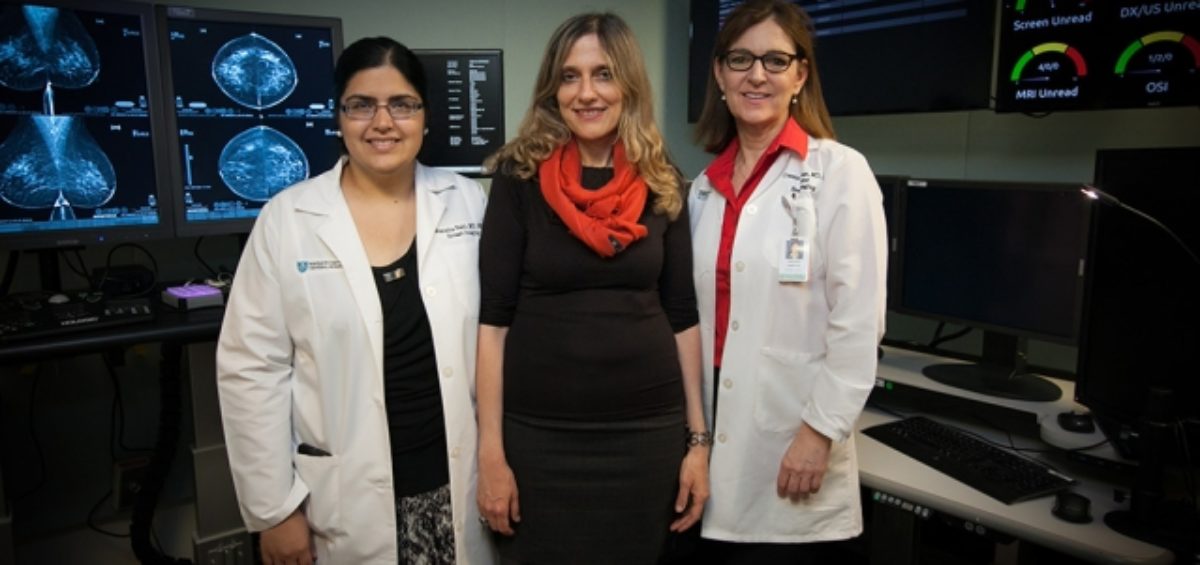

Imagine enduring a painful, expensive and scar-inducing surgery—only to find out afterwards that it wasn’t necessary. This is the situation for many women with high-risk breast lesions.

Researchers from MIT and Mass General recently unveiled a wireless, portable system for monitoring individuals during sleep that could provide new insights into sleep disorders and reduce the need for time- and cost-intensive overnight studies in a clinical sleep lab.

A recap of our second annual Art of Talking Science Competition, which focused on AI and machine learning.

There’s so much more to artificial intelligence (AI) than what you’ve seen in sci-fi movies. In fact, advancements in machine learning could provide new opportunities for medical research and diagnosis.